Multimodal Interaction for Robotics and AI

MIRAI is an architecture for human–robot interaction that allows operators to communicate intent to robotic systems through natural interaction modalities. Instead of relying on traditional teach pendants or manual programming, MIRAI enables humans to instruct robots using speech, gestures, and spatial references.

The system combines extended reality interfaces, artificial intelligence, and robotic control frameworks to translate human instructions into executable robotic actions. In this way, the human remains an active supervisory component in the control loop, guiding autonomous behavior and intervening when necessary.

Motivation

Industrial robots are typically programmed through structured workflows that require expert knowledge and significant setup time. While these approaches are effective for repetitive tasks, they limit flexibility when robots must operate in dynamic environments or collaborate with humans.

At the same time, recent advances in artificial intelligence have made it practical to interpret natural language and contextual information. This enables new forms of interaction in which humans communicate intent at a higher level while the robotic system handles execution.

MIRAI explores this interaction paradigm by integrating AI-based reasoning with spatial interfaces that allow humans to express instructions directly within the working environment.

System Architecture

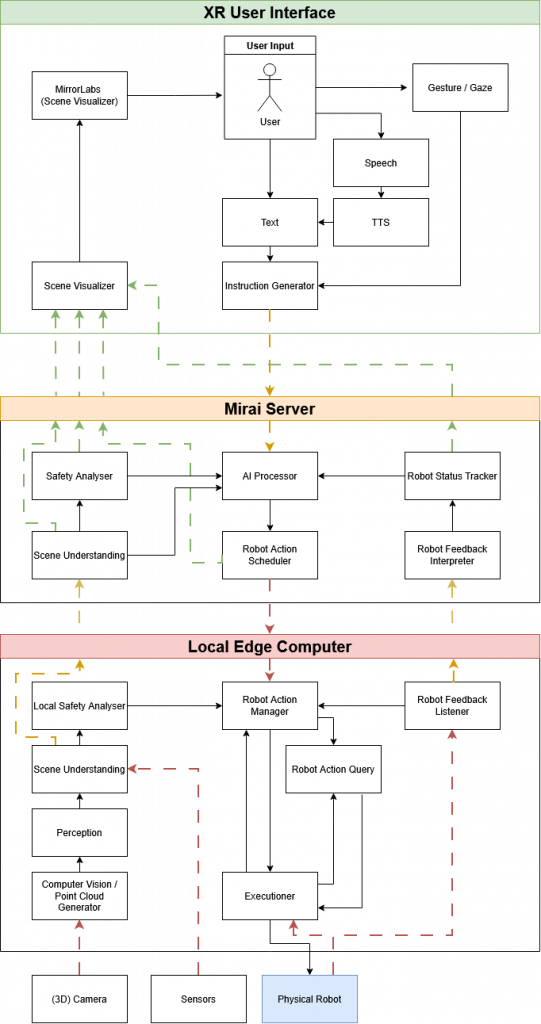

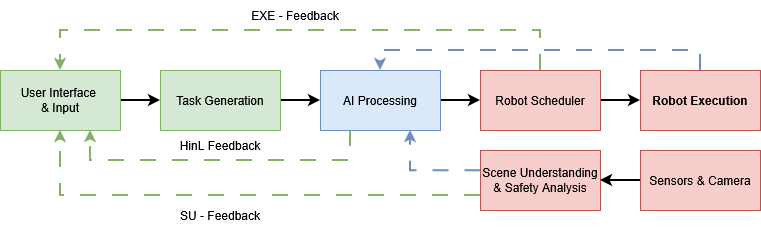

The MIRAI architecture connects three primary domains: human interaction, intent interpretation, and robotic execution.

Human input is captured through speech, gestures, and spatial interaction within an extended reality interface. These inputs are interpreted by AI-based reasoning modules that translate the instructions into structured task representations.
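
To illustrate what a structured task representation might look like, the sketch below fuses a spoken instruction with a pointing gesture into a single task record. The `TaskCommand` fields, the `fuse_inputs` function, and the keyword-based parsing are hypothetical simplifications. MIRAI's interpretation layer is AI-based, and the keyword lookup here only stands in for that reasoning step.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Point3D = Tuple[float, float, float]


@dataclass
class TaskCommand:
    """Hypothetical structured task produced by the interpretation layer."""
    action: str                      # what to do, e.g. "move"
    target_object: str               # what to manipulate
    destination: Optional[Point3D]   # workspace coordinate grounded by a gesture


def fuse_inputs(transcript: str, pointed_at: Optional[Point3D]) -> TaskCommand:
    """Combine speech with a gesture-based spatial reference (naive placeholder logic)."""
    words = transcript.lower().split()
    action = "move" if "move" in words else "unknown"
    # Treat the word right after "the" as the object to manipulate.
    target = words[words.index("the") + 1] if "the" in words[:-1] else "unknown"
    # Deictic words such as "there" are resolved using the pointing gesture.
    destination = pointed_at if ("there" in words or "here" in words) else None
    return TaskCommand(action, target, destination)


cmd = fuse_inputs("Move the box over there", pointed_at=(0.4, -0.2, 0.1))
print(cmd)
```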

The resulting tasks are executed through robotics middleware such as ROS, enabling integration with a wide range of robotic platforms. Throughout the process, the human operator maintains situational awareness through visual feedback and can intervene or refine instructions when needed.

Interaction Concept

A typical interaction scenario involves an operator observing a robotic workspace through an XR interface and providing instructions using natural language and spatial references. For example, the operator might indicate a location in the environment while instructing the robot to move objects from a conveyor belt to a designated storage area.

The AI interpretation layer converts these instructions into structured commands that can be executed by the robot. The system continuously provides feedback to the operator, allowing adjustments or corrections during execution.
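
The conveyor-to-storage scenario can be sketched as follows, assuming the interpretation layer has already produced a pick-and-place task. The primitive step names, the `expand_pick_and_place` and `execute_with_feedback` functions, and the coordinates are hypothetical. A real deployment would dispatch each step to the robot's control stack (for example through ROS) instead of only recording it.

```python
from typing import Callable, List, Tuple

Point3D = Tuple[float, float, float]


def expand_pick_and_place(obj: str, source: Point3D, goal: Point3D) -> List[str]:
    """Hypothetical expansion of a high-level 'move object' task into primitive steps."""
    return [
        f"approach {obj} at {source}",
        f"grasp {obj}",
        f"transport {obj} to {goal}",
        f"release {obj}",
    ]


def execute_with_feedback(steps: List[str],
                          report: Callable[[str], None],
                          operator_stop: Callable[[], bool]) -> List[str]:
    """Run steps one by one, reporting progress and checking for operator intervention."""
    done: List[str] = []
    for step in steps:
        if operator_stop():
            report("halted by operator before: " + step)
            break
        # Placeholder: a real system would send the step to the robot controller here.
        done.append(step)
        report("done: " + step)
    return done


conveyor = (0.8, 0.0, 0.05)   # illustrative pick location on the conveyor belt
storage = (0.0, 0.6, 0.05)    # illustrative location indicated by the operator
steps = expand_pick_and_place("box", conveyor, storage)
log: List[str] = []
# Simulated operator who intervenes after two progress reports.
executed = execute_with_feedback(steps, log.append, operator_stop=lambda: len(log) >= 2)
for line in log:
    print(line)
```

Checking for intervention before each step keeps the operator's correction window open throughout execution rather than only at the start.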

This approach allows humans to guide robotic systems without requiring detailed knowledge of robot programming or kinematic configuration.

Current Work

MIRAI is currently being developed as part of ongoing research into multimodal robot interaction and AI-assisted robotic systems. The architecture also serves as a platform for exploring future robotics interfaces in which humans supervise and guide increasingly autonomous robotic behavior.

First Signs of Life

Human-in-the-Loop Planning with MIRAI

This early prototype demonstrates MIRAI’s human-in-the-loop interaction concept. Instead of executing AI-generated robot actions immediately, the system first renders a holographic preview of the planned robot behavior in the XR environment (in this prototype, the Unity3D Editor).

The user can inspect the proposed trajectory and the system’s interpretation of the task, then either approve execution or reject the plan with annotations that trigger a new reasoning cycle.
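
The approve-or-reject cycle can be expressed as a bounded loop. This is a minimal sketch, assuming a planner that accepts the operator’s accumulated annotations as extra context. `Plan`, `Review`, `supervised_planning`, the `max_cycles` limit, and the toy planner and reviewer are hypothetical stand-ins for the AI reasoning module and the operator’s decision in the XR preview.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Plan:
    """Hypothetical planned behavior, shown as a preview before any execution."""
    trajectory: List[Tuple[float, float, float]]
    interpretation: str


@dataclass
class Review:
    """Operator verdict on a previewed plan."""
    approved: bool
    annotation: str = ""


def supervised_planning(instruction: str,
                        planner: Callable[[str, List[str]], Plan],
                        review: Callable[[Plan], Review],
                        max_cycles: int = 5) -> Optional[Plan]:
    """Plan, preview, and wait for approval; rejections feed a new reasoning cycle."""
    annotations: List[str] = []
    for _ in range(max_cycles):
        candidate = planner(instruction, annotations)
        verdict = review(candidate)        # operator inspects the preview
        if verdict.approved:
            return candidate               # only approved plans go to execution
        annotations.append(verdict.annotation)  # context for the next cycle
    return None                            # nothing approved: the robot does not move


def toy_planner(instruction: str, annotations: List[str]) -> Plan:
    # Raise the transport height if the operator asked for more clearance.
    z = 0.3 if any("higher" in a for a in annotations) else 0.1
    return Plan([(0.8, 0.0, z), (0.0, 0.6, z)], instruction)


def toy_review(plan: Plan) -> Review:
    if plan.trajectory[0][2] < 0.3:
        return Review(False, "pass higher over the conveyor")
    return Review(True)


result = supervised_planning("move boxes to storage", toy_planner, toy_review)
print(result)
```

Returning `None` when no plan is approved keeps the default safe: the robot only moves after an explicit human approval.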

This approach allows AI to generate robot behavior while keeping the human as the final supervisory decision-maker.

Technology Stack

The MIRAI architecture combines several technologies to enable this interaction paradigm. The system integrates Unity-based simulation and visualization environments with ROS-based robotic control pipelines. Artificial intelligence components interpret natural language instructions and contextual information, while computer vision and sensor integration provide awareness of the physical workspace.

Together, these components form a flexible platform for experimenting with new forms of human–robot interaction.

Unity3D

Real-time simulation and visualization of robotic environments.

ROS

Robotics middleware enabling communication and control of robotic systems.

Computer Vision

Perception of the environment through cameras and sensor data.

Large Language Models & AI

Interpretation of human intent and high-level task reasoning.

XR Interfaces

Spatial interfaces allowing humans to interact with robotic systems.

Robotics & Control

Execution of tasks through robotic kinematics and control pipelines.